13 March 2018: There is an updated post on this subject – https://www.sqlinthewild.co.za/index.php/2018/03/13/revisiting-catch-all-queries/

The query form that I refer to as ‘Catch-all’ typically results from search screens in the application where the user may enter any one (or more) of a number of optional parameters. One of the more common ways for such a query to be written in SQL is with multiple predicates in the where clause of the form (WHERE SomeColumn = @SomeVariable OR @SomeVariable IS NULL)

Now this does work. The problem is that it works fairly inefficiently and, on large tables, can result in really poor query performance. I’m going to take a look at why that is the case and what alternatives there are.

Erland Sommarskog has written on this as well, and in a lot more detail than I’m going to. His article on dynamic search conditions is well worth reading, as are the rest of his articles.

A typical example of a ‘catch-all’ query would be this one, based off a table in the AdventureWorks database.

CREATE PROCEDURE SearchHistory

(@Product int = NULL, @OrderID int = NULL, @TransactionType char(1) = NULL, @Qty int = NULL)

AS

SELECT ProductID, ReferenceOrderID, TransactionType, Quantity,

TransactionDate, ActualCost from Production.TransactionHistory

WHERE (ProductID = @Product Or @Product IS NULL)

AND (ReferenceOrderID = @OrderID OR @OrderID Is NULL)

AND (TransactionType = @TransactionType OR @TransactionType Is NULL)

AND (Quantity = @Qty Or @Qty is null)

GO

Now, let’s say that I run that query and pass values for ProductID and TransactionType. Let’s further say that there’s a nonclustered index (called idx_TranHistory_TranTypeProductID) on those two columns.
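For anyone following along, an index of that shape could be created roughly like this. The index name comes from the text above; the key column order (TransactionType first, then ProductID) is an assumption inferred from the name, not something the post states.

```sql
-- Assumed definition: key column order is inferred from the index name.
CREATE NONCLUSTERED INDEX idx_TranHistory_TranTypeProductID
    ON Production.TransactionHistory (TransactionType, ProductID);
GO
```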

EXEC SearchHistory @Product = 978, @TransactionType = 'W'

Now this returns 52 rows out of 980000 that are in the table, so we’d expect that SQL would use an index seek operation on that index, followed by a bookmark lookup.

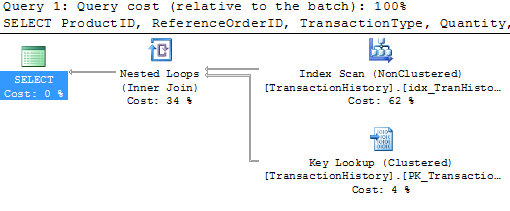

Nope. It’s using that index all right, but it’s doing a scan, not a seek. Ok, not great, but not bad. Let me try a different set of parameters:

EXEC SearchHistory @Qty = 100

The plan’s exactly the same. No surprise, it was cached the first time and then reused. There’s a problem here though: the index that’s used is completely inappropriate for this set of parameters, and there’s a bookmark lookup that ran almost a million times. No wonder this execution took 3 seconds and 2,949,715 IOs to return 29 rows.
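Figures like these can be collected with the session-level statistics settings. This is a sketch of one way to do it; the post does not say how its numbers were captured, so treat the method as an assumption.

```sql
-- Report logical reads per table and CPU/elapsed time for each statement.
SET STATISTICS IO ON;
SET STATISTICS TIME ON;
GO

EXEC SearchHistory @Qty = 100;
GO

-- Switch the reporting off again for the rest of the session.
SET STATISTICS IO OFF;
SET STATISTICS TIME OFF;
GO
```

In Management Studio the output appears on the Messages tab, not in the result grid.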

Ok, so let me try a different form of the catch-all query:

CREATE PROCEDURE SearchHistory_Improved

(@Product int = NULL, @OrderID int = NULL, @TransactionType char(1) = NULL, @Qty int = NULL)

AS

SELECT ProductID, ReferenceOrderID, TransactionType, Quantity, TransactionDate, ActualCost from Production.TransactionHistory

WHERE (ProductID = CASE WHEN @Product IS NULL THEN ProductID ELSE @Product END)

AND (ReferenceOrderID = CASE WHEN @OrderID IS NULL THEN ReferenceOrderID ELSE @OrderID END)

AND (TransactionType = CASE WHEN @TransactionType IS NULL THEN TransactionType ELSE @TransactionType END)

AND (Quantity = CASE WHEN @Qty IS NULL THEN Quantity ELSE @Qty END)

GO

Let’s see what that does for the first test:

EXEC SearchHistory_Improved @Product = 978, @TransactionType = 'W'

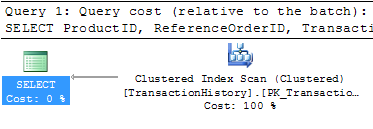

Well that’s no better. Full blown table scan.

The problem with these types of queries is that there is no stable plan. The optimal plan differs completely depending on what parameters are passed. The optimiser can tell that and it plays safe. It creates plans that will always work, whichever parameters are NULL. That’s (one of the reasons) why in the first example it was an index scan, not an index seek.

The downside of the safe plan is that it’s highly unlikely to be a good plan and, even if it is, it won’t be good for all possible combinations of parameters.

So, how to handle this type of query? Well, there are typically two ways.

Recompile

This is only an option on SQL 2008 and later. There, if the query is specified with the OPTION (RECOMPILE) hint, the optimiser knows it doesn’t have to worry about safe plans because the plan will never be reused. In fact, if I add that hint to the query in the first example, I get the expected index seek.
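As a sketch, the first procedure with the hint added might look like this. The name SearchHistory_Recompile is hypothetical, chosen here only to keep it apart from the original; the body is otherwise the same query.

```sql
-- Hypothetical name; the body is the first procedure plus a statement-level hint.
CREATE PROCEDURE SearchHistory_Recompile
(@Product int = NULL, @OrderID int = NULL, @TransactionType char(1) = NULL, @Qty int = NULL)
AS
SELECT ProductID, ReferenceOrderID, TransactionType, Quantity,
       TransactionDate, ActualCost
FROM Production.TransactionHistory
WHERE (ProductID = @Product OR @Product IS NULL)
  AND (ReferenceOrderID = @OrderID OR @OrderID IS NULL)
  AND (TransactionType = @TransactionType OR @TransactionType IS NULL)
  AND (Quantity = @Qty OR @Qty IS NULL)
OPTION (RECOMPILE);  -- compile a fresh plan for this statement on every execution
GO
```

The trade-off is that the statement pays the compilation cost on every call, which may matter for a procedure that runs very frequently.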

Dynamic SQL

The other option is to build up the query string dynamically, based on the parameters passed, and then to use sp_executesql to run it. There are the usual downsides to dynamic SQL, but it may be that the performance improvement is worth it.

CREATE PROCEDURE SearchHistory_Dynamic (@Product int = NULL, @OrderID int = NULL, @TransactionType char(1) = NULL, @Qty int = NULL)

AS

DECLARE @sSQL NVARCHAR(2000), @Where NVARCHAR(1000) = ''

SET @sSQL = 'SELECT ProductID, ReferenceOrderID, TransactionType, Quantity, TransactionDate, ActualCost

from Production.TransactionHistory '

IF @Product is not null

SET @Where = @Where + 'AND ProductID = @_Product '

IF @OrderID is not null

SET @Where = @Where + 'AND ReferenceOrderID = @_OrderID '

IF @TransactionType IS NOT NULL

SET @Where = @Where + 'AND TransactionType = @_TransactionType '

IF @Qty IS NOT NULL

SET @Where = @Where + 'AND Quantity = @_Qty '

IF LEN(@Where) > 0

SET @sSQL = @sSQL + 'WHERE ' + RIGHT(@Where, LEN(@Where)-3)

EXEC sp_executesql @sSQL,

N'@_Product int, @_OrderID int, @_TransactionType char(1), @_Qty int',

@_Product = @Product, @_OrderID = @OrderID, @_TransactionType = @TransactionType, @_Qty = @Qty

GO

Note that there’s no SQL injection vulnerability in this. The parameters are never concatenated into the string and the execution is parametrised.
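To make that concrete, here is roughly what the procedure ends up executing for @Product = 978 and @TransactionType = 'W'. This is a hand-written equivalent with the whitespace tidied, not captured output.

```sql
-- Hand-written equivalent of the generated call for @Product = 978, @TransactionType = 'W'.
-- Only predicates for the supplied parameters appear in the statement text;
-- the values themselves travel as parameters and never become part of the string.
EXEC sp_executesql
    N'SELECT ProductID, ReferenceOrderID, TransactionType, Quantity, TransactionDate, ActualCost
      FROM Production.TransactionHistory
      WHERE ProductID = @_Product AND TransactionType = @_TransactionType',
    N'@_Product int, @_OrderID int, @_TransactionType char(1), @_Qty int',
    @_Product = 978, @_OrderID = NULL, @_TransactionType = 'W', @_Qty = NULL;
```

All four parameters are still declared and passed even though the statement uses only two of them, which sp_executesql allows.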

Now each different set of parameters gets a different cached plan, optimal for that particular set of parameters.

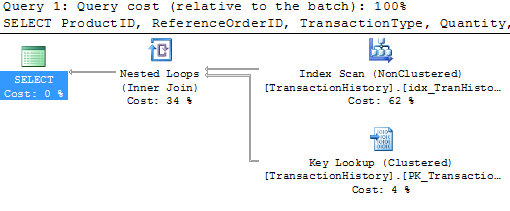

EXEC SearchHistory_Dynamic @Product = 978, @TransactionType = 'W'

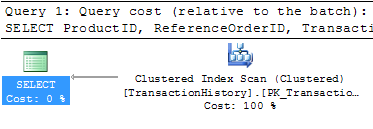

EXEC SearchHistory_Dynamic @Qty = 100

The first gets an index seek, the second a clustered index scan (because there’s no index on Quantity). Much better than the behaviour with the earlier non-dynamic versions.
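One way to see the separate cached plans is to look in the plan cache after running the two calls above. The query below is a sketch that assumes permission to read the server-level DMVs (VIEW SERVER STATE); the LIKE filter is just a convenient way to narrow the output.

```sql
-- Statements run through sp_executesql are cached with objtype 'Prepared',
-- one entry per distinct statement text.
SELECT cp.usecounts, cp.objtype, st.text
FROM sys.dm_exec_cached_plans AS cp
CROSS APPLY sys.dm_exec_sql_text(cp.plan_handle) AS st
WHERE cp.objtype = 'Prepared'
  AND st.text LIKE N'%Production.TransactionHistory%'
ORDER BY cp.usecounts DESC;
```

Each distinct combination of supplied parameters should show up as its own row, with usecounts rising as that combination is reused.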