SQL University: Advanced Indexing – Filtered Indexes

Welcome back to day 2 of Advanced Indexing. Today we’re going to look at a feature that was added in SQL Server 2008 – filtered indexes.

In previous versions, an index always covered the entire table, so it always had exactly as many rows as the table it was built on (which is why COUNT(*) can simply scan the smallest index on the table).

With filtered indexes, it’s possible to have an index that’s built on a subset of the rows in the table. The definition for a filtered index contains a WHERE clause predicate that determines if a row in the table will be in the index or not.

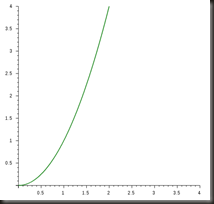

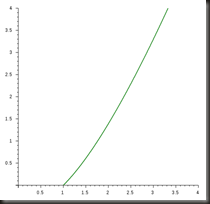

This can be a major advantage on really large tables where most queries are only interested in a small fraction of the rows. A normal index covers the entire table even when most of the table is of no interest, so the index is larger and deeper than it needs to be. A filtered index on just the interesting portion of the table keeps the index size to a minimum, which means it’s often shallower than an index on the entire table and hence cheaper to read and to maintain.

A simple example of a filtered index would be:

CREATE NONCLUSTERED INDEX idx_Example ON Account (AccountNumber) WHERE Active = 1;
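
To make the example a little more concrete, here is a minimal sketch of the kind of query that index is meant for. The table definition is an assumption made purely for illustration (only the AccountNumber and Active columns appear in the example above), as are the data types and the sample account number.

```sql
-- Hypothetical table definition, for illustration only.
CREATE TABLE Account (
    AccountID     int IDENTITY(1,1) PRIMARY KEY,
    AccountNumber char(10) NOT NULL,
    Active        bit NOT NULL
);

-- Same index as in the example above: only rows with Active = 1 are stored in it.
CREATE NONCLUSTERED INDEX idx_Example ON Account (AccountNumber) WHERE Active = 1;

-- The WHERE clause repeats the index's filter predicate,
-- so this query is a candidate for idx_Example.
SELECT AccountNumber
FROM Account
WHERE Active = 1
  AND AccountNumber = '0000012345';

-- No predicate on Active: inactive accounts might qualify, and they are
-- not in the filtered index, so idx_Example cannot answer this query.
SELECT AccountNumber
FROM Account
WHERE AccountNumber = '0000012345';
```

Whether the optimizer actually picks the index for the first query still depends on the usual cost considerations. The point is only that the query's predicate has to guarantee that every row it needs is in the index.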

There are two main uses of a filtered index:

1) Enforcing moderately complex uniqueness requirements

2) Supporting queries that filter on common subsets of a table

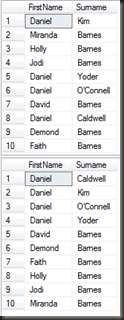

Filtered indexes and unique columns

One very interesting use of filtered indexes is in enforcing uniqueness over portions of a table. One requirement that comes up again and again is a nullable column whose entries are optional but, where present, must be unique. Basically, each entry must be unique or null. Sounds easy, but the problem is that a unique index in SQL Server allows only one null. So much for nulls not being equal to anything, including other nulls.
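
The single-null behaviour is easy to see with a small test. This is just a sketch; the temp table, column and index names are made up for the demonstration.

```sql
-- Hypothetical temp table, for illustration only.
CREATE TABLE #UniqueDemo (
    ID           int IDENTITY(1,1) PRIMARY KEY,
    OptionalCode varchar(20) NULL
);

-- An ordinary (unfiltered) unique index on the nullable column.
CREATE UNIQUE NONCLUSTERED INDEX idx_UniqueDemo_OptionalCode
    ON #UniqueDemo (OptionalCode);

-- The first null is accepted.
INSERT INTO #UniqueDemo (OptionalCode) VALUES (NULL);

-- The second null is rejected with a duplicate key error,
-- because the unique index treats the two nulls as duplicates.
INSERT INTO #UniqueDemo (OptionalCode) VALUES (NULL);

DROP TABLE #UniqueDemo;
```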

Prior to SQL 2008, implementing such a constraint required computed columns, indexed views or triggers. With SQL 2008’s filtered indexes, it’s trivial.
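
One way to do it is a unique index that is filtered to exclude the nulls, so only the non-null values are checked for uniqueness. The following is a minimal sketch under assumed names: the Customer table, its columns and the index name are all hypothetical.

```sql
-- Hypothetical table, for illustration only.
CREATE TABLE dbo.Customer (
    CustomerID     int IDENTITY(1,1) PRIMARY KEY,
    CustomerName   varchar(100) NOT NULL,
    PassportNumber varchar(20) NULL
);

-- Unique index over the non-null values only.
-- Rows where PassportNumber is null are not in the index at all,
-- so any number of them can exist.
CREATE UNIQUE NONCLUSTERED INDEX idx_Customer_PassportNumber
    ON dbo.Customer (PassportNumber)
    WHERE PassportNumber IS NOT NULL;

-- Both rows are accepted: nulls are outside the filtered index.
INSERT INTO dbo.Customer (CustomerName, PassportNumber)
VALUES ('Customer A', NULL), ('Customer B', NULL);

-- Accepted: first occurrence of this value.
INSERT INTO dbo.Customer (CustomerName, PassportNumber)
VALUES ('Customer C', 'X1234567');

-- Rejected with a duplicate key error: the value already exists in the index.
INSERT INTO dbo.Customer (CustomerName, PassportNumber)
VALUES ('Customer D', 'X1234567');
```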