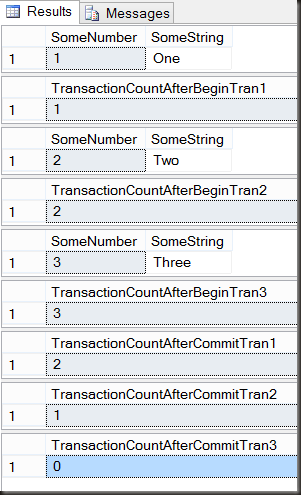

This is the second in a short series on transactions. In the previous part I discussed nested transactions and showed that they actually don’t exist. SQL has syntax which may suggest that nested transactions exist, but it’s just a syntactical lie.

One thing I suggested in that post was that transactions should be started and committed (or rolled back) at one level only.

That’s a nice idea, but it often doesn’t work when dealing with existing systems which may have been developed with less attention to transactions than ideal. What happens when a stored procedure needs to run in a transaction and potentially roll back its changes, but can be called either within an existing transaction or independently?

The answer to that comes in two parts, first the conditional starting of a transaction and secondly save points.

I should mention that this is fairly advanced transaction management, it’s not something I see in production code very often. It should be considered carefully before being used, as if the people supporting the code don’t understand this, there could be trouble.

Let’s start with some setup. Some random tables and a stored procedure which inserts into parent and child tables.

CREATE TABLE Parent (

SomeID INT IDENTITY PRIMARY KEY,

SomeRandomValue VARCHAR(50),

NotificationRequired BIT DEFAULT 0

);

CREATE TABLE Child (

SomeOtherID INT IDENTITY PRIMARY KEY,

ParentID INT,

State INT,

SomeValue INT

);

CREATE TABLE Notifications (

ParentID INT,

NotificationText VARCHAR(1000),

NotificationDate DATETIME DEFAULT GETDATE()

);

Let’s say an outer procedure which may insert into the parent table, inserts into the child table and then calls another procedure. The other procedure inserts a notification into a table.

CREATE PROCEDURE OuterProc (@SomeValue VARCHAR(50), @SomeOtherValue INT)

AS

DECLARE @ParentID INT;

BEGIN TRY

BEGIN TRANSACTION

IF NOT EXISTS ( SELECT 1

FROM dbo.Parent

WHERE SomeRandomValue = @SomeValue )

BEGIN

INSERT INTO dbo.Parent

(SomeRandomValue)

VALUES (@SomeValue);

SELECT @ParentID = @@IDENTITY;

END

ELSE

SELECT @ParentID = SomeID

FROM dbo.Parent

WHERE SomeRandomValue = @SomeValue;

INSERT INTO dbo.Child

(ParentID, SomeValue)

VALUES (@ParentID, @SomeOtherValue);

UPDATE dbo.Parent

SET NotificationRequired = 1

WHERE SomeID = @ParentID;

EXEC NotificationProc @ParentID;

COMMIT TRANSACTION

END TRY

BEGIN CATCH

ROLLBACK TRANSACTION;

-- other error handling here

END CATCH

GO

There are some potential race conditions in there, but let’s ignore those for now, it’s not the point of this post.

The requirement for the notification procedure is that if it throws an error all of its changes must be undone however the changes in the outer procedure must still commit. The notification procedure can be called independently or from within the OuterProc

The starting code for the notification procedure is:

CREATE PROCEDURE NotificationProc (@AnID INT)

AS

UPDATE dbo.Parent

SET NotificationRequired = 0

WHERE SomeID = @AnID

INSERT INTO dbo.Notifications

(ParentID,

NotificationText,

NotificationDate

)

SELECT SomeID,

SomeRandomValue,

GETDATE()

FROM dbo.Parent

WHERE SomeID = @AnID;

GO

This currently has no transaction management at all. Let’s start by implementing the first requirement, if called from outside of any transaction, it must begin a transaction and either commit or rollback all changes.

To do this, we can check the value of @@Trancount to see whether a transaction should be started or not.

CREATE PROCEDURE NotificationProc (@AnID INT)

AS

DECLARE @Independent BIT = 0;

IF @@TRANCOUNT = 0 -- proc called from outside of any transaction

SET @Independent = 1;

BEGIN TRY

IF @Independent = 1

BEGIN TRANSACTION;

UPDATE dbo.Parent

SET NotificationRequired = 0

WHERE SomeID = @AnID;

INSERT INTO dbo.Notifications

(ParentID,

NotificationText,

NotificationDate

)

SELECT SomeID,

SomeRandomValue,

GETDATE()

FROM dbo.Parent

WHERE SomeID = @AnID;

IF @Independent = 1

COMMIT TRANSACTION;

END TRY

BEGIN CATCH

IF @Independent = 1

ROLLBACK TRANSACTION;

THROW;

END CATCH;

GO

The THROW is there so that any error can be passed up to the caller to be logged or otherwise handled. It also ensures that it fires any catch block in the calling code.

That’s the first half, the transaction handling if called independently, but it doesn’t help with the second requirement, that the changes made in the notification procedure roll back if there’s an error, but leave the changes made in the calling procedure unaffected and the transaction open. To do that, we need a savepoint.

From Books Online:

A user can set a savepoint, or marker, within a transaction. The savepoint defines a location to which a transaction can return if part of the transaction is conditionally canceled. If a transaction is rolled back to a savepoint, it must proceed to completion with more Transact-SQL statements if needed and a COMMIT TRANSACTION statement, or it must be canceled altogether by rolling the transaction back to its beginning.

It’s not a nested transaction, it’s not like Oracle’s autonomous transactions, it’s just a point within the transaction to which we can roll back to. It lets us undo the most recent part of a transaction.

ALTER PROCEDURE NotificationProc (@AnID INT)

AS

DECLARE @Independent BIT = 0;

IF @@TRANCOUNT = 0 -- proc is being called from outside of any transaction

SET @Independent = 1;

BEGIN TRY

IF @Independent = 1

BEGIN TRANSACTION;

ELSE

SAVE TRANSACTION Notifications; -- Define a savepoint which we can roll the transaction back to.

UPDATE dbo.Parent

SET NotificationRequired = 0

WHERE SomeID = @AnID;

INSERT INTO dbo.Notifications

(ParentID,

NotificationText,

NotificationDate

)

SELECT SomeID,

SomeRandomValue,

GETDATE()

FROM dbo.Parent

WHERE SomeID = @AnID;

IF @Independent = 1

COMMIT TRANSACTION;

END TRY

BEGIN CATCH

IF @Independent = 1

ROLLBACK TRANSACTION; -- roll back the entire transaction

ELSE

ROLLBACK TRANSACTION Notifications; -- roll back to the save point

SELECT ERROR_NUMBER(),

ERROR_MESSAGE(),

ERROR_LINE(); -- In reality, log this somewhere

END CATCH;

GO

Looks good, let’s test. To test, I’m going to add a check constraint to the Notifications table that will be violated by the insert in the Notification procedure. This is to simulate the kind of errors that can happen in a real system (key violations, data type conversion failures, check constraint violations, etc). I’ll also add some sample data to the Parent table.

ALTER TABLE Notifications ADD CONSTRAINT Testing CHECK (NotificationDate > '2020-01-01')

INSERT INTO dbo.Parent (SomeRandomValue, NotificationRequired)

VALUES ('abc',0), ('def',1),('ghi',0),('jkl',1),('mno',1);

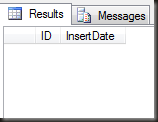

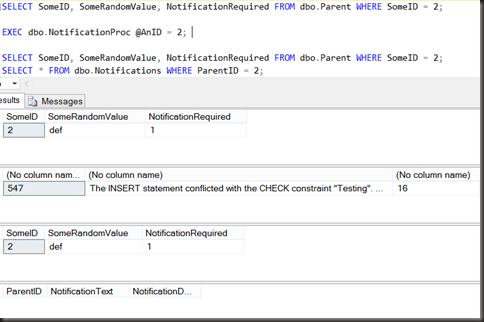

First test, outside of a transaction. The requirement is that neither of the changes in the proc remain after the execution (because of the error)

SELECT SomeID, SomeRandomValue, NotificationRequired FROM dbo.Parent WHERE SomeID = 2;

EXEC dbo.NotificationProc @AnID = 2;

SELECT SomeID, SomeRandomValue, NotificationRequired FROM dbo.Parent WHERE SomeID = 2;

SELECT * FROM dbo.Notifications WHERE ParentID = 2;

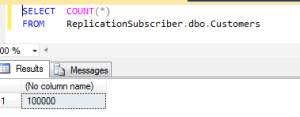

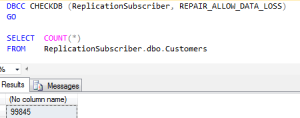

Success. When run independently the two data modifications were run in a transaction and when the error occurred, were rolled back.

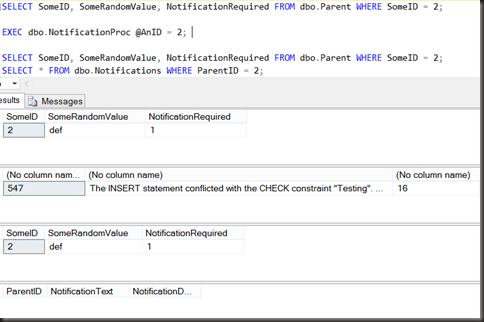

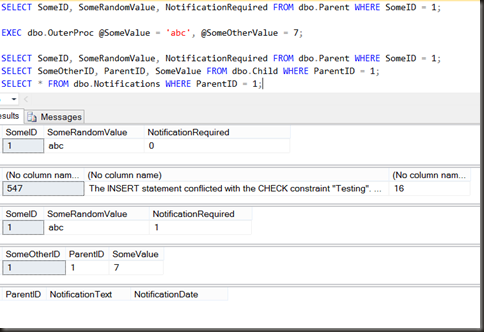

Now let’s try from within an existing transaction. The changes made in the outer procedure (insert of a row into Child and update NotificationRequired to 1 in Parent must commit, but the changes made in the inner proc must not)

SELECT SomeID, SomeRandomValue, NotificationRequired FROM dbo.Parent WHERE SomeID = 1;

EXEC dbo.OuterProc @SomeValue = 'abc', @SomeOtherValue = 7;

SELECT SomeID, SomeRandomValue, NotificationRequired FROM dbo.Parent WHERE SomeID = 1;

SELECT SomeOtherID, ParentID, SomeValue FROM dbo.Child WHERE ParentID = 1;

SELECT * FROM dbo.Notifications WHERE ParentID = 1;

Again, exactly the desired behaviour. The changes made in the outer procedure were committed, the changes in the inner procedure, the procedure where the error was thrown, were rolled back.

Used correctly, savepoints can be a powerful mechanism for managing transactions in SQL Server. Unfortunately they’re not well known and as such their use can also make code much harder for later developers to debug.

The next part of the series on transactions will look at why (and why not) you’d want to name a transaction.

The full list is:

- A Mess of Nested Transactions

- Savepoints and conditional transactions (This post)

- Why would you want to name a transaction?

- When naming transactions causes an error